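The Lasso method mainly addresses multicollinearity and overfitting. Because it shrinks some coefficients exactly to 0, it also performs variable selection.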
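The two penalties behind this behavior can be written out explicitly. As a sketch in notation introduced here for illustration (design matrix $X$, targets $y$, coefficient vector $w$, sample count $n$, penalty strength $\alpha$), the objectives minimized by scikit-learn's `Ridge` and `Lasso` estimators are:

```latex
\text{Ridge:}\quad \min_{w}\; \lVert y - Xw \rVert_2^2 + \alpha \lVert w \rVert_2^2
\qquad
\text{Lasso:}\quad \min_{w}\; \frac{1}{2n} \lVert y - Xw \rVert_2^2 + \alpha \lVert w \rVert_1
```

The L1 term has a kink at zero, which is why Lasso coefficients can land exactly at 0, while the squared L2 term shrinks coefficients without, in general, zeroing them.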

Ridge & Lasso Regression

import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
%matplotlib inline
from matplotlib.pylab import rcParams
rcParams['figure.figsize'] = 12, 10
import random

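1. Data:

# 60 angles from 60 to 296 degrees, converted to radians
x = np.array([i*np.pi/180 for i in range(60,300,4)])
np.random.seed(10)  # Setting seed for reproducibility
y = np.sin(x) + np.random.normal(0,0.15,len(x))
data = pd.DataFrame(np.column_stack([x,y]),columns=['x','y'])
plt.plot(data['x'],data['y'],'.')

# The models below use predictors x_2 ... x_15, which are never created in
# the original listing; they are assumed here to be the powers x**2 ... x**15
for i in range(2,16):
    data['x_%d'%i] = data['x']**i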

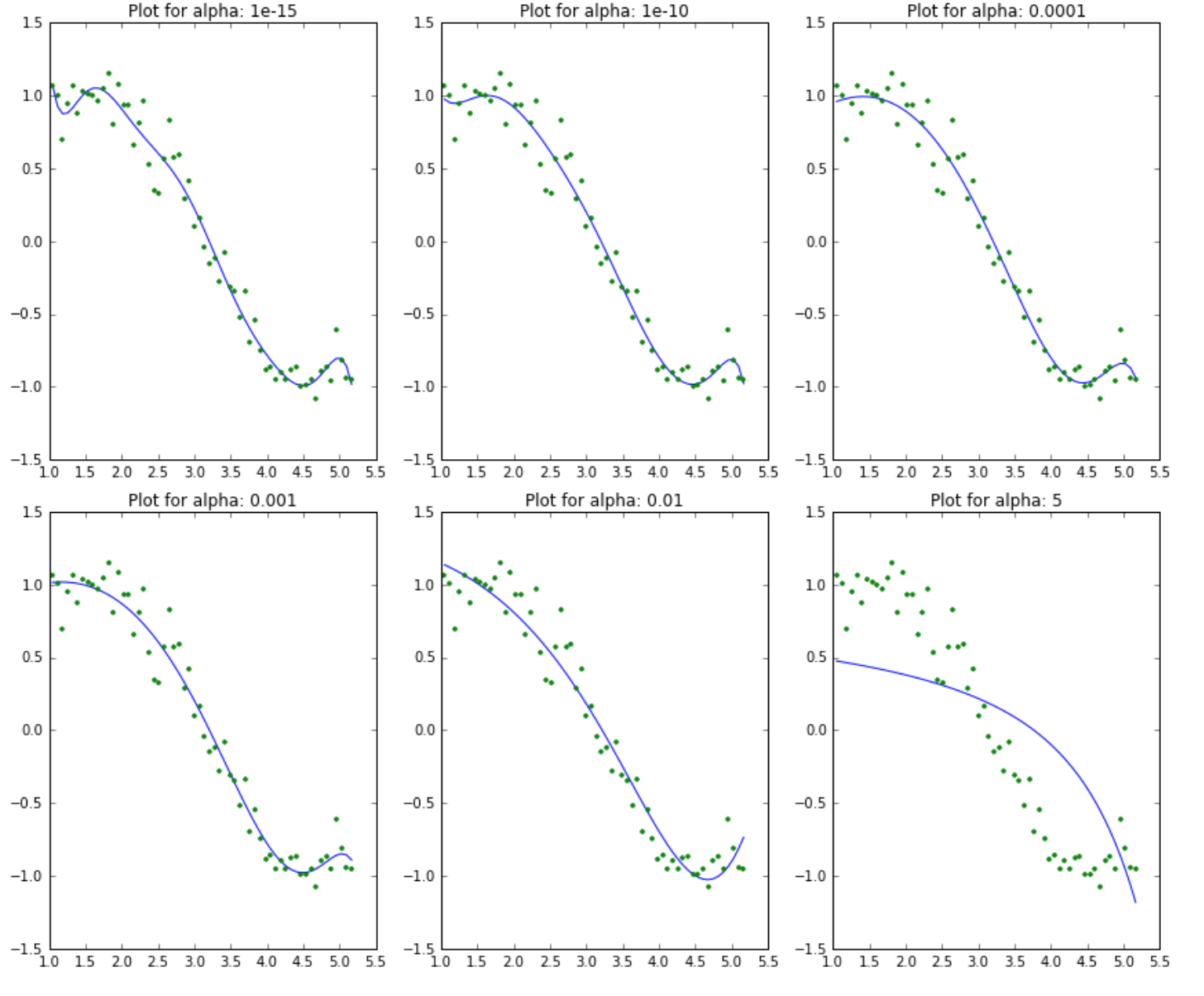

2. Ridge Modeling:

from sklearn.linear_model import Ridge

def ridge_regression(data, predictors, alpha, models_to_plot={}):
    # Fit the model
    # Note: the `normalize` parameter was removed in scikit-learn 1.2,
    # so this call needs an older version
    ridgereg = Ridge(alpha=alpha, normalize=True)
    ridgereg.fit(data[predictors], data['y'])
    y_pred = ridgereg.predict(data[predictors])

    # Check if a plot is to be made for the entered alpha
    if alpha in models_to_plot:
        plt.subplot(models_to_plot[alpha])
        plt.tight_layout()
        plt.plot(data['x'], y_pred)
        plt.plot(data['x'], data['y'], '.')
        plt.title('Plot for alpha: %.3g' % alpha)

    # Return the result in pre-defined format: [rss, intercept, coefficients...]
    rss = sum((y_pred - data['y'])**2)
    ret = [rss]
    ret.extend([ridgereg.intercept_])
    ret.extend(ridgereg.coef_)
    return ret

predictors = ['x']
predictors.extend(['x_%d' % i for i in range(2, 16)])

# Regularization strengths to try
alpha_ridge = [1e-15, 1e-10, 1e-8, 1e-4, 1e-3, 1e-2, 1, 5, 10, 20]

# One row per alpha: RSS, intercept, and the 15 coefficients
col = ['rss', 'intercept'] + ['coef_x_%d' % i for i in range(1, 16)]
ind = ['alpha_%.2g' % alpha_ridge[i] for i in range(0, 10)]
coef_matrix_ridge = pd.DataFrame(index=ind, columns=col)

# Map alpha -> subplot position in a 2 x 3 grid
models_to_plot = {1e-15: 231, 1e-10: 232, 1e-4: 233, 1e-3: 234, 1e-2: 235, 5: 236}

for i in range(10):
    coef_matrix_ridge.iloc[i,] = ridge_regression(data, predictors, alpha_ridge[i], models_to_plot)

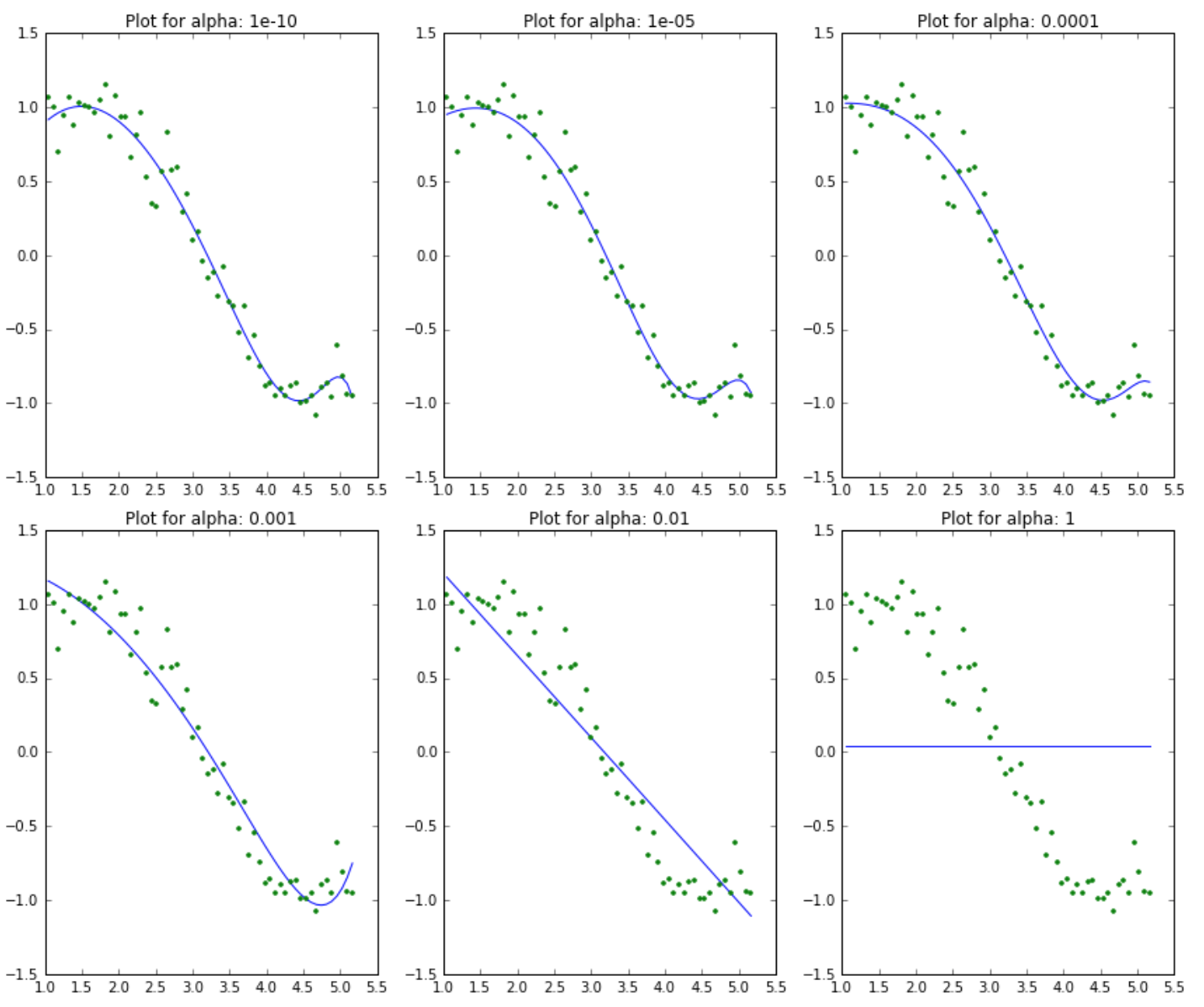
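To make the effect of alpha on a ridge fit concrete, here is a minimal NumPy sketch that solves the ridge normal equations directly on the same noisy sine data. It is not the scikit-learn implementation: the helper name `ridge_closed_form`, the choice of degree 5 instead of 15 (to keep the linear solve well conditioned), and the standardization step are all assumptions made for this illustration.

```python
import numpy as np

# Same noisy sine data as in the text
x = np.array([i * np.pi / 180 for i in range(60, 300, 4)])
np.random.seed(10)
y = np.sin(x) + np.random.normal(0, 0.15, len(x))

# Polynomial features x, x^2, ..., x^5 (degree 5 is an assumption of this sketch)
degree = 5
X = np.column_stack([x ** d for d in range(1, degree + 1)])

# Center and scale so the penalty treats every column alike
X_std = (X - X.mean(axis=0)) / X.std(axis=0)
y_c = y - y.mean()


def ridge_closed_form(X, y, alpha):
    """Hypothetical helper: solve (X^T X + alpha * I) w = X^T y for w."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ y)


alphas = [1e-3, 1e-1, 1.0, 10.0, 100.0]
norms, rss_values = [], []
for alpha in alphas:
    w = ridge_closed_form(X_std, y_c, alpha)
    rss = np.sum((y_c - X_std @ w) ** 2)
    norms.append(np.linalg.norm(w))
    rss_values.append(rss)
    print('alpha=%-7g  ||w||=%10.4f  rss=%.4f' % (alpha, norms[-1], rss))
```

As alpha grows, the coefficient norm shrinks and the residual sum of squares on the training data rises, which is the trade-off the plots above illustrate.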

3. Lasso Modeling:

from sklearn.linear_model import Lasso

def lasso_regression(data, predictors, alpha, models_to_plot={}):
    # Fit the model
    # Note: the `normalize` parameter was removed in scikit-learn 1.2,
    # so this call needs an older version; max_iter is given as an integer
    lassoreg = Lasso(alpha=alpha, normalize=True, max_iter=1000000)
    lassoreg.fit(data[predictors], data['y'])
    y_pred = lassoreg.predict(data[predictors])

    # Check if a plot is to be made for the entered alpha
    if alpha in models_to_plot:
        plt.subplot(models_to_plot[alpha])
        plt.tight_layout()
        plt.plot(data['x'], y_pred)
        plt.plot(data['x'], data['y'], '.')
        plt.title('Plot for alpha: %.3g' % alpha)

    # Return the result in pre-defined format: [rss, intercept, coefficients...]
    rss = sum((y_pred - data['y'])**2)
    ret = [rss]
    ret.extend([lassoreg.intercept_])
    ret.extend(lassoreg.coef_)
    return ret

predictors = ['x']
predictors.extend(['x_%d' % i for i in range(2, 16)])

# Regularization strengths to try
alpha_lasso = [1e-15, 1e-10, 1e-8, 1e-5, 1e-4, 1e-3, 1e-2, 1, 5, 10]

# One row per alpha: RSS, intercept, and the 15 coefficients
col = ['rss', 'intercept'] + ['coef_x_%d' % i for i in range(1, 16)]
ind = ['alpha_%.2g' % alpha_lasso[i] for i in range(0, 10)]
coef_matrix_lasso = pd.DataFrame(index=ind, columns=col)

# Map alpha -> subplot position in a 2 x 3 grid
models_to_plot = {1e-10: 231, 1e-5: 232, 1e-4: 233, 1e-3: 234, 1e-2: 235, 1: 236}

for i in range(10):
    coef_matrix_lasso.iloc[i,] = lasso_regression(data, predictors, alpha_lasso[i], models_to_plot)
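The way Lasso drives coefficients to exactly zero can be seen in a small from-scratch solver. The following is a minimal sketch of cyclic coordinate descent for the objective (1/(2n))·||y − Xw||² + alpha·||w||₁, assuming centered data and no intercept. The function names and the synthetic data are hypothetical and chosen only for this illustration; this is not scikit-learn's code.

```python
import numpy as np


def soft_threshold(z, t):
    """Shrink z toward zero by t; any |z| <= t becomes exactly 0."""
    return np.sign(z) * np.maximum(np.abs(z) - t, 0.0)


def lasso_coordinate_descent(X, y, alpha, n_sweeps=200):
    """Minimize (1/(2n)) * ||y - Xw||^2 + alpha * ||w||_1 one coordinate at a time."""
    n_samples, n_features = X.shape
    w = np.zeros(n_features)
    residual = y.astype(float).copy()          # residual = y - X @ w
    col_scale = (X ** 2).sum(axis=0) / n_samples
    for _ in range(n_sweeps):
        for j in range(n_features):
            residual += X[:, j] * w[j]         # remove feature j's contribution
            rho = X[:, j] @ residual / n_samples
            w[j] = soft_threshold(rho, alpha) / col_scale[j]
            residual -= X[:, j] * w[j]         # add the updated contribution back
    return w


# Synthetic data (an assumption): only the first two features matter
rng = np.random.RandomState(0)
X = rng.randn(100, 8)
X = (X - X.mean(axis=0)) / X.std(axis=0)
true_w = np.array([3.0, -2.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0])
y = X @ true_w + 0.1 * rng.randn(100)
y = y - y.mean()

# At or above alpha_max, the all-zero vector is the solution
alpha_max = np.max(np.abs(X.T @ y)) / len(y)
zero_counts = []
for factor in [0.01, 0.1, 0.5, 1.01]:
    w = lasso_coordinate_descent(X, y, factor * alpha_max)
    zero_counts.append(int(np.sum(w == 0)))
    print('alpha = %.2f * alpha_max -> %d zero coefficients' % (factor, zero_counts[-1]))
```

The soft-thresholding step is what produces exact zeros: whenever a feature's correlation with the current residual falls below alpha, its coefficient is set to 0 rather than merely made small.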

Example

This example fits Lasso and Elastic-Net regression models to a manually generated sparse signal corrupted with additive noise, then compares the estimated coefficients with the ground truth.

"""
========================================
Lasso and Elastic Net for Sparse Signals
========================================

Estimates Lasso and Elastic-Net regression models on a manually generated
sparse signal corrupted with an additive noise. Estimated coefficients are
compared with the ground-truth.
"""
print(__doc__)

import numpy as np
import matplotlib.pyplot as plt

from sklearn.metrics import r2_score

###############################################################################
# Generate some sparse data to play with
np.random.seed(42)

n_samples, n_features = 50, 200
X = np.random.randn(n_samples, n_features)
coef = 3 * np.random.randn(n_features)
inds = np.arange(n_features)
np.random.shuffle(inds)
coef[inds[10:]] = 0  # sparsify coef: only 10 entries stay nonzero
y = np.dot(X, coef)

# Add noise: size= draws one value per sample
# (a positional tuple would be taken as the mean instead)
y += 0.01 * np.random.normal(size=n_samples)

# Split data into train set and test set
# (integer division, since slice indices must be integers in Python 3)
n_samples = X.shape[0]
X_train, y_train = X[:n_samples // 2], y[:n_samples // 2]
X_test, y_test = X[n_samples // 2:], y[n_samples // 2:]

###############################################################################
# Lasso
from sklearn.linear_model import Lasso

alpha = 0.1
lasso = Lasso(alpha=alpha)

y_pred_lasso = lasso.fit(X_train, y_train).predict(X_test)
r2_score_lasso = r2_score(y_test, y_pred_lasso)
print(lasso)
print("r^2 on test data : %f" % r2_score_lasso)

###############################################################################
# ElasticNet
from sklearn.linear_model import ElasticNet

enet = ElasticNet(alpha=alpha, l1_ratio=0.7)

y_pred_enet = enet.fit(X_train, y_train).predict(X_test)
r2_score_enet = r2_score(y_test, y_pred_enet)
print(enet)
print("r^2 on test data : %f" % r2_score_enet)

plt.plot(enet.coef_, color='lightgreen', linewidth=2,
         label='Elastic net coefficients')
plt.plot(lasso.coef_, color='gold', linewidth=2,
         label='Lasso coefficients')
plt.plot(coef, '--', color='navy', label='original coefficients')
plt.legend(loc='best')
plt.title("Lasso R^2: %f, Elastic Net R^2: %f"
          % (r2_score_lasso, r2_score_enet))
plt.show()
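The `alpha` and `l1_ratio` arguments used above control how the Elastic-Net penalty mixes the L1 and L2 terms. As a rough sketch, the objective that scikit-learn documents for `ElasticNet` can be evaluated by hand as follows. The helper names `enet_objective` and `lasso_objective` and the random data are hypothetical, introduced only for this illustration, and the intercept is ignored for simplicity.

```python
import numpy as np


def enet_objective(X, y, w, alpha, l1_ratio):
    """(1/(2n))*||y - Xw||^2 + alpha*l1_ratio*||w||_1 + 0.5*alpha*(1 - l1_ratio)*||w||_2^2"""
    n_samples = X.shape[0]
    residual = y - X @ w
    data_fit = residual @ residual / (2.0 * n_samples)
    l1_term = alpha * l1_ratio * np.sum(np.abs(w))
    l2_term = 0.5 * alpha * (1.0 - l1_ratio) * np.sum(w ** 2)
    return data_fit + l1_term + l2_term


def lasso_objective(X, y, w, alpha):
    """(1/(2n))*||y - Xw||^2 + alpha*||w||_1"""
    n_samples = X.shape[0]
    residual = y - X @ w
    return residual @ residual / (2.0 * n_samples) + alpha * np.sum(np.abs(w))


# Small random problem, used only to evaluate the formulas
rng = np.random.RandomState(0)
X = rng.randn(20, 5)
w = rng.randn(5)
y = X @ w + 0.1 * rng.randn(20)

print('elastic net, l1_ratio=0.7:', enet_objective(X, y, w, 0.1, 0.7))
print('elastic net, l1_ratio=1.0:', enet_objective(X, y, w, 0.1, 1.0))
print('lasso                    :', lasso_objective(X, y, w, 0.1))
```

With `l1_ratio=1.0` the L2 term vanishes and the Elastic-Net objective coincides with the Lasso one, while `l1_ratio=0.7` keeps most of the sparsity-inducing L1 penalty and adds a smaller L2 penalty.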

This work is licensed under a CC A-S 4.0 International License.